AI Stole My Style. What Are My Options?

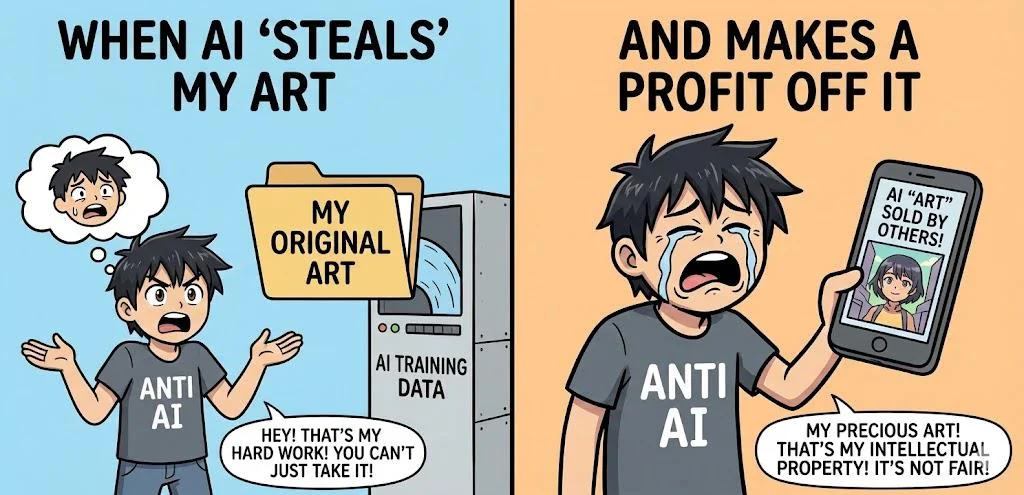

Your aesthetic has been absorbed into a model and is being sold back to the market without your consent. Here is what you can actually do about it.

It usually starts with a notification. Someone tags you in a post — "this looks just like your work!" — and when you click through, you find something that uses your color palette, your compositional style, your linework. The caption says it was made with an AI prompt. Your name might even be in it.

You didn't authorize this. You weren't paid. You might not even have known your work was part of any training dataset.

So what can you do?

Understand What You're Actually Dealing With

First, it helps to separate the different things that might be happening:

Your work was in a training dataset. Services like Have I Been Trained (haveibeentrained.com) let you search for your images in common training datasets like LAION-5B. If your work is there, models trained on that dataset most likely ingested it without your explicit consent, though whether that constitutes infringement is still being litigated.

An AI is being prompted with your name. This is separate from whether your work was used in training. Anyone can type "in the style of [your name]" into a prompt, whatever the model was trained on, and copyright law generally doesn't protect style itself. However, if the outputs are being sold commercially using your name, right-of-publicity law may apply, depending on where you live.

A specific work has been reproduced. If an AI output reproduces substantial elements of a specific piece — not just style, but recognizable visual content — that moves closer to straightforward infringement.

Knowing which situation you're in determines which tools are actually available to you.

Immediate Steps

Document everything. Screenshot the output, the prompt if visible, the platform, the date, and any commercial context. If the work is being sold, screenshot the listing and the price. This documentation is essential for any legal or platform action.
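One way to keep that documentation consistent is a small evidence log. The sketch below is only an illustration, not a legal standard: the function names, field names, and the JSON Lines file are all assumptions. It records where and when you captured a screenshot, plus a SHA-256 hash of the file so you can later show the screenshot hasn't been altered since you logged it.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def log_evidence(screenshot: Path, url: str, platform: str,
                 note: str, log_file: Path) -> dict:
    """Append one evidence record to a JSON Lines log and return it."""
    record = {
        # Self-reported capture time in UTC (hypothetical field names).
        "captured_at_utc": datetime.now(timezone.utc).isoformat(),
        "platform": platform,
        "url": url,
        "note": note,  # e.g. the visible prompt text or the listing price
        "screenshot": screenshot.name,
        "sha256": sha256_of(screenshot),
    }
    with log_file.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")
    return record
```

Calling `log_evidence(Path("listing.png"), "https://example.com/item", "ExampleMarket", "sold as a print", Path("evidence.jsonl"))` would add one line to the log. Keep the original screenshot files alongside the log, since the hash is only useful if the file it describes still exists.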

Submit an opt-out request. Spawning's opt-out tool covers several major datasets and is recognized by some AI companies. It won't remove your work from models that have already been trained, but it signals intent and creates a record.

File a DMCA takedown if specific works are reproduced. If you can identify a specific output that reproduces substantial elements of a specific copyrighted work you own, a DMCA notice to the platform hosting it is your most direct tool. US platforms that rely on the DMCA's safe harbor have a strong incentive to remove the content promptly once they receive a valid notice.

Contact an IP attorney. If you're a working professional whose income is being materially affected, a consultation with an intellectual property attorney costs a few hundred dollars and clarifies what leverage you actually have. Many offer free initial calls.

The Platform Level

Major platforms — Instagram, DeviantArt, ArtStation, Adobe Stock — have updated their policies around AI content. Most now have mechanisms for reporting:

- Content that impersonates your style with your name attached

- Commercial content that misrepresents AI output as your work

- Outputs that reproduce identifiable elements of specific works

Platform enforcement is imperfect and slow, but a formal report creates a record and, in some cases, results in takedowns or account suspensions.

The Legal Landscape as It Stands

The lawsuits are moving. The class action brought by artists against Stability AI, Midjourney, and DeviantArt is proceeding. Getty Images' case against Stability AI in both the US and UK is further along. These cases will establish precedent — but they will take years.

In the meantime, the US Copyright Office has issued guidance that AI-generated content without meaningful human creative input is not copyrightable. This cuts both ways: it weakens the ability of AI companies and their users to claim copyright over purely AI-generated outputs, but it doesn't automatically strengthen your hand against style appropriation.

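The EU AI Act requires providers of general-purpose AI models to publish a summary of the content used to train them, an obligation that began applying in August 2025. A summary is not a list of individual works, but it gives artists a formal starting point for asking whether their work was used.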

What Actually Protects You Going Forward

There is no single action that fully resolves this. Style appropriation at scale is a structural problem, not a one-time incident. But there are positions that are stronger than others:

Establish verifiable provenance for your work. The more clearly documented your creative process is — with timestamped evidence that specific works originated with you — the stronger your position in any dispute about derivative outputs.
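As a rough illustration of what a provenance record can look like, the sketch below builds a manifest of SHA-256 hashes for every file in a project folder and later checks whether any file has changed. The function names and manifest layout are assumptions made for this example. Note that the timestamp is self-reported by your own computer, so a manifest like this is stronger when a copy is also lodged with an independent party at the time you create it.

```python
import hashlib
from datetime import datetime, timezone
from pathlib import Path


def file_hash(path: Path) -> str:
    """SHA-256 hex digest of a file's full contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def build_manifest(folder: Path) -> dict:
    """Map each file under `folder` (by relative path) to its hash."""
    files = {
        path.relative_to(folder).as_posix(): file_hash(path)
        for path in sorted(folder.rglob("*"))
        if path.is_file()
    }
    return {
        "created_at_utc": datetime.now(timezone.utc).isoformat(),
        "files": files,
    }


def changed_files(manifest: dict, folder: Path) -> list[str]:
    """Return manifest entries that are now missing or have a different hash."""
    changed = []
    for rel_path, recorded in manifest["files"].items():
        current = folder / rel_path
        if not current.is_file() or file_hash(current) != recorded:
            changed.append(rel_path)
    return changed
```

Running `build_manifest` on a folder of sketches, working files, and final exports gives you a single record you can save as JSON. An empty list from `changed_files` later indicates the files still match what you recorded.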

Make human origin a feature, not an assumption. Clients, collectors, and platforms that value human creativity are increasingly willing to pay a premium for verified work. Certification turns your humanity into a documentable asset.

Connect with other affected artists. The class action against Stability AI, Midjourney, and DeviantArt was filed by artists working together. Collective action has more leverage than individual complaints.

The Honest Prognosis

Style protection is going to remain imperfect. The law wasn't designed for this situation, and retrofitting it is slow.

What's within reach right now is a stronger position: better documentation, clearer provenance, and a verified record of your creative output that gives you something to stand on when your work is contested. That isn't everything. But it's significantly better than nothing.
